The Edge of Safe Rust

Horribly misusing Rust features to provide provable memory safety and tracing garbage collection for pointer soup.

2026-04-22

- Introduction

- Background

- Pointers in Rust without clear ownership is Very Hard

- Rust is actually just really good at Vec

- But Rust is pretty bad at safe circular references

- Generativity, or for<'a> is the coolest feature in Rust

- Dealing with "reachability" via tracing

- A sketch of a real, raw-pointer based GC

- Wrapping up -- The Bigger Picture

Errata: The talk slides and this blog post have had a lot of typos and minor code errors, more than I think I usually let slip through. I blame my complete lack of sleep! I'm fixing those as I find them to make sure they don't interfere with the points I'm trying to make. If you notice a discrepancy between the slides linked here and the slides in a video version (whenever that is posted), the slides here are more correct, so pretend I used those instead :) Sorry for doing this so late!

Introduction

This is the text version of a talk that I am giving for the first ever TokioConf on April 22. I'm writing this talk first as a mostly normal blog post because I find that writing these things as prose first helps me prepare.

I'm including a link to the slides here if you're watching my talk and would like to follow along, and whenever a video version is ready I will also link that.

When picking what to do for a talk, I'm always torn between two conflicting goals:

- Write about something that I know well and will be interesting to a large audience.

- Write about something that I have a unique perspective on, where I might be able to say things that few others can.

Neither of these is a bad strategy, but this talk / post will be almost entirely an example of the second goal. I have been recently living in a very strange corner of the Rust language, and I decided to base my talk around that experience.

This talk is going to be about something that might seem unusual for TokioConf, it's going to be about, at least on the surface, garbage collection and VMs in safe Rust. Since I've decided on the second approach to my talk, I have to confront the reality that I haven't actually written a lot of real networking code lately ¯\(ツ)/¯ (and any networking code I write is always video game centric, which is not really the same what Tokio is used for). More than that though, for the last few months I've been working with Kong (one of the sponsors of TokioConf!) on exactly this topic, they've contracted me to help them design small, isolated, safe Rust VMs for their networking rules engine. Therefore, I would argue, it must be at least a little on topic! But really, this is what I've been working on, this is what I have at least a chance of saying something interesting on, so this is what my talk is.

I hope you find it interesting!

I'm also assuming here no familiarity with any of my other work or talks or previous blog posts, so I will definitely cover some things I've talked about before.

Background

A long while ago I had an obsession with trying to integrate tracing garbage collection into safe Rust.

I was far from the only one who tried, but I may have been the only one who had two specific, very aggressive, core goals:

- Totally zero-cost pointers[1]This is subtle, but what I mean

is that

Gcpointers themselves would be transparent wrappers around*const TandCopy, and thus have exactly the same cost as a raw pointer, even if there is obviously (unavoidable) overhead in the allocation and collection parts. - Usable from completely safe Rust

I consider myself to largely have failed and determined that doing this

in the general case more or less required language support that didn't

exist yet. However I must not have failed that badly, because my best

attempt at solving this, gc-arena[2]Since being used by Ruffle, gc-arena has had lots of really core

contributions from Ruffle devs, I am not trying to take sole credit for it!

is now used by two projects that are used by millions of

people. Ruffle, the browser

based flash emulator, uses gc-arena for its ActionScript VM, and Fields

of Mistria is using (in its next release)

my own project fabricator, a

GameMaker replacement runtime which in turn also uses

gc-arena.[3]What actually happened is that the problem I did

manage solve (garbage collecting only when no Rust code is "active" within the

GC "context") is perfect for games, because games (and Ruffle is basically a

game engine) never really need to bother garbage collecting within a frame,

and code (other than special things like coroutines) never runs for more than

a single frame. This should be viewed as a wonderful example of "do you maybe

have a different problem that I can solve instead?"

This is obviously a brag, but more than that, I've realized that I, along with just a few other people, exist in an exceedingly strange ecosystem that barely anyone using Rust is even aware of. This is not unique to my effort (obviously I'm the most familiar with this one), but solving safe garbage collection in Rust almost universally requires type or lifetime tricks or coding patterns that look insane from the outside. You don't need to understand these examples, but look at this code written for a real, professional project[4]Fields of Mistria which I unabashedly adore and is a steal and you should definitely try you like cozy farming games. that is sold to real people for money...

// What the hell is `ctx`?

let methods = Gc::new(&ctx, DsGridMethods);

// What the hell is `unsize!`? Why does it have custom syntax?

let methods_ptr = gc_arena::unsize!(methods => dyn vm::UserDataMethods<'gc>);and this...

// What the hell is the `Rootable![_]` macro, it evaluates to a **type**?

// What the hell does '_ in a macro mean?

let methods = ctx.singleton::<Rootable![DsGridMethodsSingleton<'_>]>().0;

let ud = vm::UserData::new::<Rootable![DsGrid<'_>]>(&ctx, self);

ud.set_methods(&ctx, Some(methods));and this...

// Okay downcasting I understand, `downcast_write` must be like...

// `downcast_mut`, returning a `&mut T`? No? It's something else entirely that

// is impenetrable GC jargon? `&'gc barrier::Write<T>`?

let ds_list = DsList::downcast_write(&ctx, ud).unwrap();

// Again, what the hell is a barrier? Why does this have to be a macro? What

// syntax is this? WHY??

let inner = barrier::field!(ds_list, DsList, inner);

// Okay this part I basically understand, but what the hell is *unlocking*?

let mut vec = inner.unlock().borrow_mut();This is the kind of over-engineered "I'm too smart for my own good" code that might get you fired[5]or, somehow, invited to TokioConf if you didn't have an EXTREMELY good reason to write it this way.

And yet... even though code like this is nearly inscrutable, it still exists right now in two moderately popular, real world projects? I'm taking this is a sign that there is a poorly understood corner of the problem space for potential Rust programs, and that it is a good time to introduce more people to this space. There are a lot of interesting classes of programs that I feel ought to be simpler in safe Rust but are not at all right now, and even with the cognitive load of programming in what can feel like a strange pidgin language that just sort of mostly looks like Rust, it can even be the best viable option.

If I introduce more people to my personal hell, then maybe it won't be so bad?

Pointers in Rust without clear ownership is Very Hard

But don't worry, this post is not actually about the specific design

of gc-arena or most of this stuff I just showed you.[6]Start

here if you're actually interested.

The real point was to show you that handling garbage collection in Rust, at

least if you don't want unsafe {} and *const T everywhere in your user code,

is incredibly hard. This post is not even about garbage collection primarily,

what it's about is mostly dealing with a simple, frustrating truth that everyone

using Rust for long enough has run into countless times:

Rust is really bad at dealing with cyclic pointers.[7]Controversial, I know.

This is the central idea in this talk, an exploration of some of the ways to deal with this reality in current Rust.

Now, this post is going to contain a lot of what is effectively just whining about the state of Rust as a language, but it's important to put this in the proper context. When I complain, I'm complaining about what is achievable with a completely safe Rust interface, and really for the set of problems I'm going to describe, Rust is basically the only game in town if you want provable memory safety.

Programming languages, extremely broadly, fall into one of three camps in how they deal with pointers[8]What I mean by "pointer" is technically either a pointer or a reference in Rust parlance, whatever way that the language provides to "point" to a value from somewhere else. and pointer safety:

- The Ownership and

Dropcamp: Safe Rust, Swift, C++ in theory - The "Reachability" camp, AKA the "garbage collected" languages: Javascript, Go, Python, Ruby, Lua, OCaml, Java, Scheme, on and on... These languages always allow cyclic pointers, usually because they only have a single reference type and there is no such thing as "owning" or such a thing as "pointers" to break cycles.

- The "Just Trust Me Bro" camp, AKA most of the "systems programming" languages: Unsafe Rust, C, C++ in practice, anything where you may hear the words "use after free".

Almost everyone who reads #2 in that list will immediately think "the garbage collected languages", and they're not wrong, but before we go on I want to make one (possibly) controversial statement that will be very relevant in how we think about this solution space:

Ownership and Drop are also a form of garbage collection.

This is obviously a simple matter of definition, but I think this is the

definition that makes the most sense. Any language that doesn't force the

user to free memory manually must somehow free that memory automatically, and

the name "garbage collection" is usually used to describe any system that frees

memory automatically? I feel like this should not be thought of as strange at

all.[9]Calling Drop and Rc just another kind of garbage collection

seems to be a hobby horse of much of the GC literature, but I do agree with

it.

So with this lens, we can immediately see why Rust is bad at dealing with

circular pointers... Since (safe) Rust is memory safe and must prove that

pointers (references) are not dangling, and its only garbage collection system

is based on ownership, and cyclic pointers by definition do not have any clear

ownership relation, it must not be able to garbage collect them! So, either

all possibly cyclic pointers must live for exactly the same amount of time

(&'static T), or garbage collection is not automatic and thus cannot be proved

safe by the compiler (pointers must be raw pointers and freed manually).

Everyone who uses Rust finds out about this almost immediately.[10]and usually this discovery is derogatorily called "fighting the borrow checker" The only possibly new thing I'm introducing is how to think about this problem in relation to other languages: Rust has ownership and automatic drop, most other safe languages instead have a notion of reachability, and when an object is unreachable it is freed. Rust picked a garbage collection system that has "deterministic" drop at the expense of being able to deal with cycles through reachability, because this is what is most broadly useful in systems programming: it has deterministic performance, minimal runtime requirements, and it allows non-trivial or load-bearing behavior at the statically known point of drop.

Now, thinking about the projects that I've been working on recently, it should be obvious why I've been forced to deal with this unfortunate reality of Rust: Those projects have been using Rust to safely implement languages in the "reachability" camp.

It's a bit of a meme at this point that it's hard to build linked lists in Rust. Here's a cyclical linked list in Lua:

-- An example of a cyclical linked list in Lua

local a = {}

local b = {}

local c = {}

-- You can just like... set the `next` pointer? And that's it?

a.next = b

b.next = c

c.next = a

-- Now our nodes look like this. Easy!

--

-- [a] -> [b] -> [c]

-- ^ |

-- | |

-- +-------------+This is done trivially, and cycles like this are pervasive in real Lua code (and Python, and Ruby, and Javascript, and even Java, and...), even if it's almost never literally a linked list. So, how can Rust possibly handle this? Somewhere, in a Rust interpreter for such a "reachability" language, you must be able to represent this data structure as some inner Rust data structure.

Given what I've said, this should be impossible to do safely. And, at least if

you want to ever delete any unreachable pointer, then doing this using normal

&'a T is (in the general case), impossible to do soundly. We will cover ways

in which we can do this using other kinds of special pointers and evil tricks

later.

For now, we certainly know from just looking at crates.io that there

exists Rust code that can handle cyclic graphs structures, and certainly

the vast majority of it does not use advanced lifetime tricks or

bacon-rajan cyclic Rc to

accomplish this. Let's first discuss the (by far) most common way to handle this

problem in Rust...

Rust is actually just really good at Vec

Normally, at some point when you first learn Rust, you will run into a problem that seems to require a cyclic reference and you will inevitably get a borrow check error. Almost nobody runs into this with academic examples like linked lists (unless they're learning by implementing those specifically), usually it's actually something that can be solved in a different (sometimes better, sometimes just different, sometimes a little more irritating) way and then you move on until you internalize Rust's ownership system a bit better.

Eventually however, you will run into a problem that just plain requires some kind of cyclic reference. Sometimes there just isn't a way to always know what a "back reference" is from context, or objects are in a truly unstructured cyclic graph and have no obvious ownership relation, and there are no other options.

Eventually you will figure out (or someone will tell you) that the proper way

to solve this problem is by encoding your graph using a Vec with indexes. This

is honestly a great solution, especially when the problem is otherwise simple!

If all of your "nodes" can be a single Rust type, and you just need to sort

of build a graph structure and not really do a lot of small, dynamic inserts

and deletes, then you can build your graph by pushing nodes onto a Vec, and

use their indexes into the Vec as your "pointer", and that's it! The only

real change is having to pipe through a "pointer context" of sorts (access to

the underling storage vector) and the syntactic change from node.field to

nodes[node_idx].field.

Let's look at a very small worked example of this, with just a bit of a helper API...

For simplicity in this section, this is only going to cover the allocation of a single type. This can be pretty straightforwardly extended to an allocation of multiple types by using something like

HashMap<TypeId, Box<dyn Any>>and downcasting.

// An allocator for slots in a vec.

struct SlotAlloc<T> {

slots: Vec<T>,

}

impl<T> SlotAlloc<T> {

// Allocate a new slot, push it onto our `slots` vec, return its index!

// Picture of simplicity.

fn alloc(&mut self, value: T) -> usize { ... }

// Get a value by an allocated index.

fn get(&self, index: usize) -> &T { ... }

fn get_mut(&mut self, index: usize) -> &mut T { ... }

}When we use Vec this way, I would posit that we use it much more like an

allocator than an array. We don't really care what the actual value is

returned by SlotAlloc::alloc is in the same way we don't usually care about

the exact address returned by an allocator.[11]Yeah yeah processors

love memory locality and there's caching and prefetching and all that, we

all know, that's not what I'm talking about <3

If we scrambled the

locations of all of the slots and updated the index "pointers", any algorithm we

run would not meaningfully change.

This is either going to sound pointless or painfully obvious[12]If

it is, I at least hope I'm going somewhere you don't already expect

(or both), but I would like you to think of this technique like this: pointers

(usize indexes) with some kind of context (the owning SlotAlloc). Thinking

about things in this way helps us evaluate it in comparison to other kinds of

pointers like safe builtin references, raw pointers, etc.

First, the good parts... For one, nothing beats usize for simplicity! It's

Copy, you can serialize it, since we're using "segmented memory", you can

choose native pointer sizes (usize) or shrink the address space (u32 /

u16). Also crucially, unlike a real reference, usize will never be !Send

even if the referent isn't Sync, which makes the entire container able to be

Send when any use of real references might not allow this![13]This

one is important and will come up again later! If you want, start thinking about

why this is true very carefully.

This one can be almost unsolvable

in a safe way and even force you into an index-based strategy for soundness.

Okay now for the bad parts. First, there are some annoyances in comparison to normal pointers.

All of the bad qualities of our "pointers" are extremely similar to problems

that arise using real machine pointers. First, like all allocation strategies,

basically ANYTHING will work if you simply never actually free!. Extending our

SlotAlloc to be able to free entries is simple enough, we just need to replace

our slot type by Option<T> and keep a list of free slots...

// An allocator for slots in a vec.

struct SlotAlloc<T> {

slots: Vec<Option<T>>,

free_list: Vec<usize>,

}

impl<T> SlotAlloc<T> {

// Allocate a new value. If we have any known free indexes in `free_list`,

// use them. Otherwise, push a new value, either way, return the index of

// the assigned slot.

fn alloc(&mut self, value: T) -> usize { ... }

// Set this slot to `None` and push the slot onto `free_list`.

fn free(&mut self, index: usize) { ... }

// Get a value by an allocated index. If we are given an `index` that has

// been freed, these will panic!

fn get(&self, index: usize) -> &T { ... }

fn get_mut(&mut self, index: usize) -> &mut T { ... }

}But, besides not actually automatically freeing anything (obviously), "freeing"

our "pointers" can lead to classic use after free bugs! If you are careless and

accidentally free an index then try to access the freed index, this will lead to

invalid use of freed memory (unwrapping a None). Now, in this case you get a

deterministic panic, which is a billion times better than UB, but still, it is

much less useful than automatically managing memory.

There is a second problem, known as the ABA problem. In our pointer analogy

this is another case of "use after free", but it is much worse than a panic.

If you accidentally free an index that still has a live reference somewhere,

then allocate another value and it happens to get the same index, accessing the

"dead" index will both succeed and give you an effectively random, valid value

of whatever your T type is. Though unlikely, this could even turn out to be

as bad as something like Heartbleed,

where instead of getting the data you expect, you get some other random data

with no errors whatsoever and then potentially expose that data to the wrong

party.

There's also a third problem, which is that if we have more than one of our

SlotAlloc allocators, we've split our memory up into "segments". Now, this

is not a problem on its face, in fact it's kind of an useful feature to be

able to split our "address space" like this, but it does allow the user to

confuse segments for their pointers. If you have one SlotAlloc instance

and are importing data into another separate SlotAlloc instance and you mix

up which index goes with which instance, you will get either panics if you're

lucky, and our "UB but not really" if you're not.

Let's solve as many of these problems as we can.

First, let's tackle the ABA problem.

There's this pretty well known idea[14]which I actually also talked

about in my 2018 RustConf talk

called

"generational indexes". The idea is pretty simple, we need to keep a separate

counter called a "generation" with every slot in the SlotAlloc structure.

Then, every time an entry is deleted, increment the generation counter for

that slot. The "index" returned on alloc becomes a new type which is a pair of

both the normal slot index, and whatever this "generation" was at the time of

allocation. The whole idea looks something like this...

// An allocator for slots in a vec.

struct SlotAlloc<T> {

slots: Vec<Slot<T>>,

free_list: Vec<usize>,

}

// A slot within a `SlotAlloc`.

struct Slot<T> {

// The `generation` is incremented every time a slot is freed.

generation: u32,

value: Option<T>,

}

// An abstract "index" into a `SlotAlloc`.

#[derive(Copy, Clone)]

struct Index {

// The index into the `slots` vec.

slot: u32,

// The generation at the time of allocation.

generation: u32,

}

impl<T> SlotAlloc<T> {

// Allocate a new value. If we have any known free slots in `free_list`,

// assign our value to that slot, *increment the generation*.

//

// Otherwise, create a new slot at the end of the `slots` vec with a

// `generation` of 0 and set the value.

//

// The returned `Index` will have the assigned `slot`, along with the

// `generation` of that slot.

fn alloc(&mut self, value: T) -> Index { ... }

// Set the slot for this index to `None` and push the slot onto `free_list`.

//

// Panics if `index.generation` is not identical to the current slot

// generation, because this would be a double free!

fn free(&mut self, index: Index) { ... }

// Get a value by an allocated index.

//

// If the value in `index.slot` is freed or if `index.generation` does not

// match the current slot generation, then these methods will panic.

//

// This protects against both direct UAF and also the ABA problem.

fn get(&self, index: Index) -> &T { ... }

fn get_mut(&mut self, index: Index) -> &mut T { ... }

}Unfortunately, there is no simple solution to statically preventing errors

(panics, whatever) when accessing deleted indexes. But, with the generational

solution to the ABA problem, nothing here "feels like" UB by another name

anymore. We either will have a valid Index into our SlotAlloc and get back

the right value, or Index is invalid and we will get a panic instead.

The problem of using an index to access the wrong SlotAlloc[15]"segment", because I am determined to make you sick of this analogy by

the time I am done <3

is just as easily solved. It is simple enough

to assign a unique ID to each SlotAlloc and return that as part of the

Index, and check this ID on access. Now our Index type could look like

this...

struct SlotAlloc {

// Randomly generated ID value.

alloc_id: u32,

...

}

struct Slot<T> { ... }

struct Index {

// The ID of the parent `SlotAlloc`

alloc_id: u32,

...

}

impl<T> SlotAlloc<T> {

// Return a new `SlotAlloc` with a randomized `alloc_id`.

fn new() -> SlotAlloc<T> { ... }

fn alloc(&mut self, value: T) -> Index { ... }

// `free`, `get`, and `get_mut` now all also panic if the `index.alloc_id`

// value does not match, which means they were generated by a different

// `SlotAlloc`.

fn free(&mut self, index: Index) { ... }

fn get(&self, index: Index) -> &T { ... }

fn get_mut(&mut self, index: Index) -> &mut T { ... }

}And, with this, we have pretty much solved the problems listed with our original

SlotAlloc design, at least to the point that every possible error will be

checked at runtime and deterministically panic. We no longer have any of the

"sort of UB" behavior that we were trying to avoid!

We also have seem to made what feels even more like a normal allocator for a

single Rust type? The SlotAlloc really behaves just like an allocator, except

instead of handing back *mut T it hands back Index. We haven't ensured

that errors can't happen, but we did at least ensure that no error can possibly

happen silently. This is kind of cool that you can take "indexes into a Vec"

this far!

So, to summarize, we have a kind of "special pointer" (Index) into a "segment"

(SlotAlloc), which contains some identifier for our segment (alloc_id), the

real pointer into this segment (slot), and a kind of provenance value that

tells which call to alloc generated our "pointer" (generation).

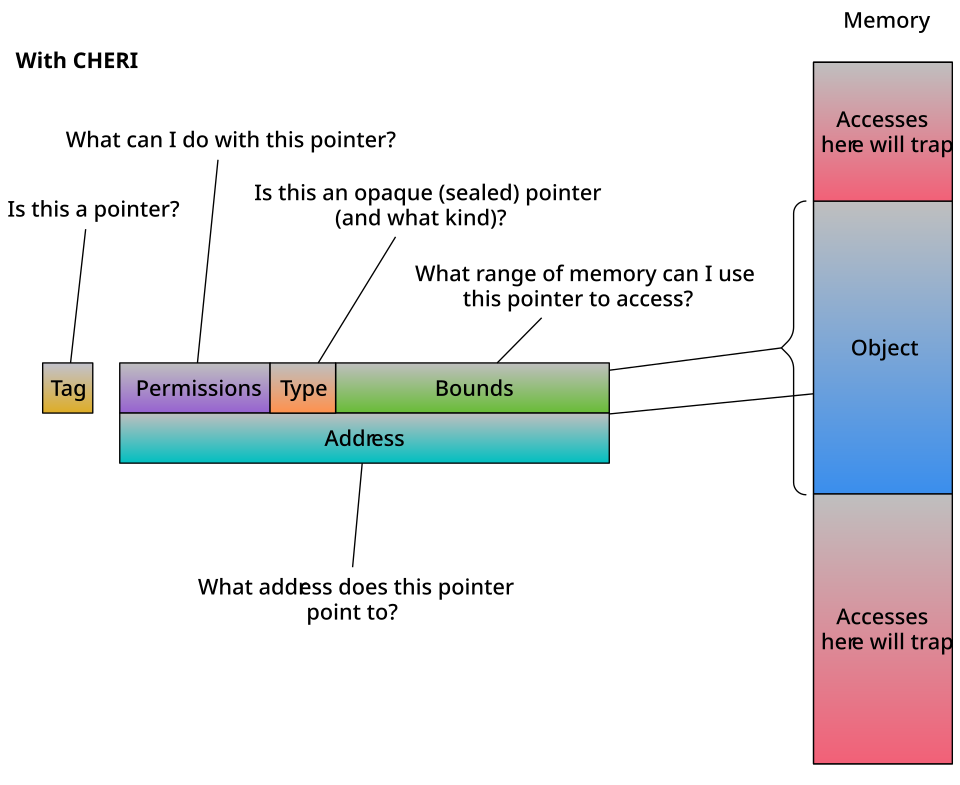

We can just decide on any number of bits of the three pieces, lets decide on a

nice, evocative number of bits for each piece of our TypedIdx and lay them

out in memory like this...

[------arena ID (32 bits)------][-----generation (32 bits)-----]

[------------------------slot (64 bits)------------------------]Please direct your attention to this graphic, from the wikipedia article on CHERI (Capability Hardware Enhanced RISC Instructions):

Pointer problems require Pointer solutions

CHERI is an extremely cool hardware capability system[16]to say I'm not doing it justice in my description here is a ridiculous understatement that prevents UB by marking pointers with metadata that ensures that they are used correctly, and if they are used incorrectly, are guaranteed to "trap" (invoke an error handler).

Now... I understand that saying CHERI is the same as generational indexing

extremely silly...[17]it's hardware acclerated generational

indexing!

but the similarities are kind of suggestive.[18]Please understand I don't actually literally think this. I know CHERI

has hardware enforced validity, bounds checking, can protect against data

races (edit: I guess not?), and has been in research for 15 years. This is a

shitpost.

The point here is that we've built a system that is a lot

like a normal hardware pointer but safe, both in the real UB sense of safe and

also our "inner UB-like behavior" sense, so it makes sense that to do this we'd

be looking over the same landscape of solutions.

The point of this section is to show you that, if you look at it the right way,

the "use Vecs and indexes" solution to (safe) Rust lacking more capable pointers

is remarkably similar to just wholesale reinventing the pointer without the

Rust enforced limitations. I'm not saying this is even a bad thing! Having a

spectrum of compiler enforced correctness vs runtime correctness is a good

thing[19]If CHERI was widely deployed, I could see Rust having a

CheriPtr<T> type that, depending on the platform specifics, could be freely,

cheaply shared and manually freed, guaranteeing to trap instead of cause

UB.

but we need to be clear eyed about it. Any level of this situation

is definitely better than raw pointers and UB[20]From personal

experience, just having generational indexing from something like slotmap can

be a godsend and is a genuinely great place to stop. About 90% of the time, it

works every time.

but it is not a good place for us to all stop looking

for solutions.

But Rust is pretty bad at safe circular references

Okay, so we've seen that a complete pointer-like index solution is viable, but (in many of the same ways as raw pointers) is not ideal. Is there a way to do better?

Well, obviously true Rust references (&'a T) are better than any kind of

Vec index or raw pointer, and are usually even better than any kind of "smart"

pointer, so let's see if it's possible at all to generate a data structure with

actual circular references in Rust.

Surprisingly, it turns out, that it is not only possible but relatively easy!

We'll take inspiration from our example that we used for Lua before and create

a linked list in Rust.

This is what our Node type could look like...

// A simple example of a node in a linked list.

struct Node<'a> {

value: String,

next: Cell<Option<&'a Node<'a>>>,

}

impl<'a> Node<'a> {

fn new(value: String) -> Node<'a> {

Node {

next: Cell::new(None),

value,

}

}

fn next(&self) -> Option<&'a Node<'a>> {

self.next.get()

}

fn set_next(&self, node: Option<&'a Node<'a>>) {

self.next.set(node)

}

}And we can use it like this...

// A bump allocator from the `bumpalo` crate.

let bump = bumpalo::Bump::new();

// Each returned node here is a reference, and that reference has the lifetime

// of the `bump` allocator itself.

let a = bump.alloc(Node::new("1".to_owned()));

let b = bump.alloc(Node::new("2".to_owned()));

let c = bump.alloc(Node::new("3".to_owned()));

// We can thus set the ``next` node pointers to make make the 'a lifetime

// in each of the nodes equal to the lifetime of the references returned by

// `Bump::alloc`.

//

// Since all of the lifetimes of references returned by `Bump::alloc` are the

// same, all of the 'a lifetimes in each `Node` instance become the same, and

// *that* is why this can even work. The fact that this is circular is encoded

// in the fact that Rust has determined all of these lifetimes to be the same.

//

// This *has* to be true, because every node references (either directly or

// indirectly) every other one, so they *must* live for exactly the same time.

a.set_next(Some(b));

b.set_next(Some(c));

c.set_next(Some(a));So, if this is so easy, what exactly is this blog post even about?

Well, this solution has a few problems. The first, obvious one is

that since we use lifetimes in our node types that end up borrowing

bump, you can't easily move the entire set of bump, a, b,

and c anywhere, but this is not unsolvable. You can use things like

self_cell to move the allocator

along with the things it has allocated.

There is a much bigger problem though, and that is, in order to maintain

soundness, none of these Nodes can ever be dropped. If you've ever

used bumpalo for any amount of time you will have run into this before,

and this fact is at the very top of the documentation for Bump. Why is this required

for soundness? Well, suppose we had a Drop impl like so...

impl<'a> Drop for Node<'a> {

fn drop(&mut self) {

println!("dropping node with value {:?}", self.value);

if let Some(next) = self.next() {

println!("next value {:?}", next.value);

}

}

}Since all of our nodes a, b, and c have next pointers set, all of their

Drop impls would print their own value and their next value. However, what

order should a, b, and c be dropped in? No matter what order we pick,

there is not a valid order that wouldn't have UB! If you drop a first, then

the owned String value in a will be dropped, and then the drop impl for b

and c will be able to access it for UAF, and the same thing for any other node

that you pick first.

This is obvious once you think about it, and this is THE reason why bumpalo

cannot offer such an API. In fact, here's an equivalent way to build the same

linked list without bumpalo at all.

let a = Box::leak(Box::new(Node::new("1".to_owned())));

let b = Box::leak(Box::new(Node::new("2".to_owned())));

let c = Box::leak(Box::new(Node::new("3".to_owned())));Obviously this also works because all of the returned allocations have the same

lifetime, it's just that this lifetime is now 'static!

Why doesn't this drop problem show up with Vec-based solutions? Well, the answer

is pretty obvious, we didn't store real pointers, we stored "pointers" that

were only useful when we had access to their "context" type SlotAlloc. Since

we don't have access to SlotAlloc by the construction of the Drop trait,

this problem just never came up!

Maybe we can manually delete nodes like we can with SlotAlloc?

Well as it stands, I believe without MaybeDangling it is

actually impossible to ever manually drop any Node without UB (because there

is no viable order to do so). If you wrap references in some kind of wrapper,

either with MaybeDangling or just internal raw pointers, there might be a

solution to allow removing nodes, but it is not clear at all how to enable this

with a safe interface or to ensure that only unreachable nodes are dropped.

It gets even worse though! Cell<T> is Send assuming that T is Send.

There's nothing at all unsafe about sending a Cell<T> to another thread, what

would be unsafe is sending a &Cell<T> to another thread, since Cell itself

has no thread safety for updates, which is why Cell<_> is always !Sync.

Well, unfortunately, that makes our Node type above also !Send, since it has

references to other Nodes in it, which makes the entire thing also !Send.

struct RefNode<'a> {

value: String,

// RefNode can't be `Sync` because of `Cell<T>`, but that makes `&'a

// RefNode<'a>` `!Send`, so `RefNode` can't be `Send` either.

next: Cell<Option<&'a Node<'a>>>,

}

struct IdxNode {

value: String,

// If instead of using raw references we use an index, this problem

// completely goes away. `IdxNode` is `Send`, and any network of them is

// also `Send`.

next: Cell<Option<NodeIdx>>,

}Now even though this attempt has a lot of limitations, it has taught us an important hint for any eventual better solution...

In any set of circular references, all of those references must have the same lifetime.

It's actually a promising sign that this is even directly possible in Rust and

that Rust seems like it can at least handle this case where a graph of objects

are all non-'static but need to have the same lifetime. Is there anything we

can do about the limitations? Our indexing solution didn't have these problems,

let's back up and think about the indexing solution again to see if we can't get

any inspiration there.

Generativity, or for<'a> is the coolest feature in Rust

So our indexing solution actually had QUITE a lot of advantages! The main

disadvantage, besides potential performance issues, is that indexes are only

checked at runtime rather than compile time. This is not unusual, normally

when writing any kind of Rust code that indexes into a slice, indexes are

checked at runtime to ensure safety, so this may not seem like an issue, but we

are using indexes as a kind of pointer... it is certainly not the case that

&'a T or Rc<T> or Arc<T> have to be checked to be dangling at runtime!

So, I said that it is normal for indexes into a Vec to have runtime checking, and this is true, but it doesn't have to be true. There is actually a technique in Rust to allow for, among other things, safe indexing without bounds checking! It uses a kind of type property called "generativity", and it was first described by Gankra in her master's thesis that Rust actually has such types, sort of by accident!

"Generativity" of a type is a property that makes it a sort of limited kind of dependent type: means that every time a bit of code is executed at runtime, it is as though a brand new type is generated at that location in code at runtime, and the generated type will be distinct from any other generated type created by execution of the same code.

Now this is literally not what is occurring, there is no notion of types at runtime in a Rust program, but it is still possible to have this property in Rust using lifetimes and the borrow checker! By specifying a HRTB (Higher Ranked Trait Bound) on a function parameter, we can declare that this function must work for any possible lifetime like this:

fn generate_a_lifetime(f: impl for<'a> Fn(&'a ())) {

f(&());

}Now this is actually fairly normal in Rust code, but it is already close to

what we want. The Rust borrow checker, by policy, does not cross function

boundaries. So, within the body of the provided callback, the borrow checker

has minimal knowledge of what 'a actually is, since we have declared that our

callback function must work for any possible 'a. It is as though when f

is called, it is given a brand new, totally unique lifetime a for that call,

which is exactly the property we want! In fact this would actually be a complete

example of generativity if it were not for the fact that Rust lifetimes have

subtyping. For example...

generate_a_lifetime(|a| {

generate_a_lifetime(|mut b| {

// `a` has a type like `&'a ()` and `b` has a type like `&'b ()`, and

// we want the 'a and 'b to be two totally distinct types, yet this will

// type check! The problem is that the type `&'a ()` is *covariant* over

// the lifetime 'a, so it can be *shortened*.

b = a;

});

})So, if we can get rid of Rust's lifetime subtyping, then we can give types

using those lifetimes perfect generativity. It turns out we absolutely can do

that by making a type that is invariant over the lifetime 'a.

// Any mutable type is always invariant over the data it holds, so `Cell<T>` is

// invariant over `T`. If we use a lifetime 'a in `T`, the resulting type will

// always be invariant over 'a, and so will not have any subtyping relationship

// with any different lifetime.

type Id<'id> = PhantomData<Cell<&'id ()>>;

fn generate_an_id(f: impl for<'a> Fn(Id<'a>)) {

// This is to point out that it doesn't actually *matter* what the

// "real" lifetime is here for the `Id` we pass in. Safety comes from the

// *guaranteed lack of knowledge* that the callback has, due to the borrow

// checker never using information from across function boundaries.

let id: Id<'static> = PhantomData;

f(id);

}

generate_an_id(|mut a| {

generate_an_id(|mut b| {

// Neither of these statements will work, the lifetime 'a in `Id<'a>`

// cannot be lengthened or shortened to unify with any other. This is

// *exactly* the property we want! As a nice side effect, it is also now

// impossible to place `Id<'a>` in something like thread local storage,

// so we additionally know that our `Id<'a>` type cannot *escape* the

// callback.

//

// a = b;

// b = a;

});

});And success, we now have a type Id<'a> that has this generativity property!

So, what does this buy us? Well, you can read more in the paper, but the

essential ability that this gives Rust is that it allows for creating a copyable

Id, then at a later time, verifying that a given Id is a copy of the

original Id at compile time. This can be used to provide totally safe

indexing without bounds checks. Let's look at an example[21]copied

almost verbatim from the linked paper

...

// Invariant branding ID.

type Id<'id> = PhantomData<Cell<&'id ()>>;

// A wrapper around a slice, combined with a unique type `Id`.

struct Array<'arr, 'id, T> {

array: &'arr [T],

_id: Id<'id>,

}

// A *trusted* in-bounds index to an `Array` with the same `Id`.

struct Index<'id> {

idx: usize,

_id: Id<'id>,

}

impl<'arr, 'id, T> Array<'arr, 'id, T> {

fn len(&self) -> usize { ... }

// Return an `Index<'id>` if `idx` is < `self.len()`.

fn verify(&self, idx: usize) -> Option<Index<'id>> { ... }

// This method does not need bounds checking internally! As long as we know

// that an `Index` with the correct `Id` can only be generated by calling

// `Self::verify` on *this* instance, we know that it must be within the

// bounds of our inner slice.

fn get(&self, idx: Index<'id>) -> &T { ... }

}

// Create an `Array` out of a slice and visit it in a checked indexing "context".

fn make_array<'arr, T>(

array: &'arr [T],

f: for<'id> impl FnOnce(Array<'arr, 'id, T>)

) { ... }And this is how we use it...

make_array(&[1, 2, 3, 4, 5], |array| {

// Verifying a raw index requires a bounds check.

let idx = array.verify(4).unwrap();

// But using that index does not! Because of our `Id` type, we know that the

// `idx` value *must* have come from the `array` that created it.

dbg!(array.get(idx));

make_array(&[1, 2, 3], |array2| {

// This will not type check, because the `Id` in `array` is not the same

// as the `Id` in `array2`.

//

// let _ = array2.get(idx);

})

});Obviously in this simple example this is a little silly, but you could imagine using this technique in interesting ways that would actually be useful, in fact there's even a crate for it. But what if we used this technique to add compile time safety to our indexing system from before?

So to recap, our original indexing system had two main sources of correctness

problems at runtime: 1) use after free / the ABA problem, and 2) confusion

of indexes between different arenas. It's pretty obvious how generativity can

be used to prevent arena confusion, at least on the surface: an Id type can

"brand" Index, and then only Indexes returned from the correct SlotAlloc

can be used to index into that SlotAlloc. This is almost the same as the

example above (if you completely skip over the complexity of storing NodeIdx

outside of the callback, which we'll get to).

So that's one potential correctness issue checked at compile time, what about

the other one? Well, our problem statement was to find a solution to circular

references, but really the way that languages that allow this as part of

their runtime handle this is through determining reachability. If we allow

for manual deletion, then it's not really possible to avoid manual checks, at

least not in any way that will help[22]We could scan the entire graph

for validity or something but then why do manual checks?

. If we were

somehow able to automatically determine reachability and delete nodes that

are no longer reachable, then (at least, outside of the code that does this)

we could even make it impossible to observe deleted Indexes! We could even get

rid of generational indexing entirely!

We have hardly proved that this is possible, but it is a very promising lead! In the next section, we are going to flesh this idea out completely and build a working system to handle circular references, complete with automatic deletion of unreachable references.

Dealing with "reachability" via tracing

Let's digress from our exploration of the larger solution space and dig into a

precise solution for everything we've been talking about so far, complete with,

yes, actual tracing garbage collection. We'll start sketching out a garbage

collection system which internally uses indexes and starts from a similar place

to our SlotAlloc example from before.

Just like in the section on

SlotAlloc, we'll be sticking to a single type for simplicity. In this case, it is not obvious at all how to extend this design to multiple types, so you'll just have to trust me that it is possible!

We have a lot of decisions to make, but based on what we've decided so far, we

should be able to at least figure out what our equivalent to Index should

look like. We'll call them what we hope will be a more appropriate name once

everything is done, Gc. We'll also use our Id type from above...

// Invariant branding ID.

type Id<'gc> = PhantomData<Cell<&'gc ()>>;

// A "pointer" into an `Arena` based on sound, unchecked indexing.

#[derive(Copy, Clone)]

struct Gc<'gc> {

// Let's call our shot, we should need neither an arena ID nor a generation!

index: usize,

_id: Id<'gc>,

}We'll also pick the same storage solution as our first SlotAlloc attempt, the

absolute simplest, bare minimum.

struct Arena {

slots: Vec<Option<T>>,

free_list: Vec<usize>,

}Okay so far so good, but we still have to figure out what the interface should

look like. Let's add an accessor that passes in a type with the branding Id

and a few methods on it...

type Id<'gc> = ...;

struct Gc<'gc> { ... }

struct Arena<T> {

// Our `ArenaCtx` is what actually will hold all of the data.

ctx: ArenaCtx<'static, T>,

}

impl<T> Arena<T> {

// This is kind of like our `make_array` function from the checked indexing

// example. Inside, we have a "context", represented by `ArenaCtx`, which

// will be unique for every call to this method.

fn enter<F, R>(&mut self, f: F) -> R

where F: for<'gc> FnOnce(&mut ArenaCtx<'gc, T>) -> R

{

// Just like in the checked indexing example, the specific lifetime 'gc

// doesn't actually matter, only the lack of knowledge.

f(&mut self.ctx)

}

}

// A handle passed in via `Arena::enter` that allows accessing the arena.

struct ArenaCtx<'gc, T> {

slots: Vec<Option<T>>,

free_list: Vec<usize>,

_id: Id<'gc>,

}

impl<'gc, T> ArenaCtx<'gc, T> {

// Allocate a new value and return the `Gc` index.

fn alloc(&mut self, value: T) -> Gc<'gc> { ... }

// Access a value by its index. We are *sure* that any `Gc` passed in here

// MUST have been retured from `ArenaCtx::alloc` from *the same* instance

// due to our branding `Id` type! It should be impossible to use these in a

// way that causes a panic, checked at compile time!

fn get(&self, gc: Gc<'gc>) -> &T { ... }

fn get_mut(&mut self, gc: Gc<'gc>) -> &mut T { ... }

}So this actually works, however in practice this feels pretty incomplete. The

most major problem is that the entire design makes sure that Gc<'gc> indexes

can't escape from a call to Arena::enter, because just like the checked

indexing example, Arena::enter is what is used to define the branding Id

type which we are using to ensure correctness. If we can't get anything into

or out of Arena::enter, then really each call is totally independent and this

really isn't as useful as we'd like.

Another problem is our T type. We pick our concrete T type from the outside,

let's say we wanted to use this for our linked list example...

// A linked list node containing a `Gc` index.

struct Node<'gc> {

next: Cell<Option<Gc<'gc>>>,

value: String,

}

// We can't really create an `Arena` type like this because `Arena` can't

// actually allocate anything that contains `Gc` pointers. All of those `Gc`

// pointers will be "branded", and this branding comes from `Arena::enter`, but

// we're not inside it yet. We can only use this to allocate values that don't

// contain `Gc` indexes at all, but that's not very useful!

type NodeArena = Arena<Node<'_>>;It really doesn't work as is, because whatever T we pick can't possibly hold

any Gc values. Is there any solution to these?

Let's tackle the second solution first. If we can somehow come up with a

'static version of Node called something like... ArenaNode, and this

type can produce a Node<'gc> for the branding 'gc lifetime, then we can at

least define whatever the T in Arena<T> is. We'll make a trait for this just

so we're not writing code for only a concrete type, since that's not helpful,

and it turns out we can use GATs (Generic Associated Types) to "produce" our

non-'static type.

// We will need an implementation of this type for whatever we want our `Arena`

// to store.

trait ArenaType {

// We can use GATs! If we ever need to reference a type with the right

// brand, we can just reference this associated type and give it the brand

// lifetime we want.

type Value<'gc>;

}So lets try to change our Arena type from above to use our new trait.

type Id<'gc> = ...;

struct Gc<'gc> { ... }

// Okay, so rather than taking a `T` type parameter, we know we will need to

// take an `A: ArenaType` instead.

struct Arena<A: ArenaType> {

ctx: ArenaCtx<'static, A>,

}

struct ArenaCtx<'gc, A: ArenaType> {

slots: Vec<Option<A::Value<'gc>>>,

free_list: Vec<usize>,

_id: Id<'gc>,

}

// And then we just... update all of our methods to take `A` instead. This

// seems like it actually does work!

impl<A: ArenaType> Arena<A> {

fn enter<F, R>(&mut self, f: F) -> R

where F: for<'gc> FnOnce(&mut ArenaCtx<'gc, A>) -> R

{

f(&mut self.ctx)

}

}

impl<'gc, A: ArenaType> ArenaCtx<'gc, A> {

fn alloc(&mut self, value: A::Value<'gc>) -> Gc<'gc> { ... }

fn get(&self, gc: Gc<'gc>) -> &A::Value<'gc> { ... }

fn get_mut(&mut self, gc: Gc<'gc>) -> &mut A::Value<'gc> { ... }

}And then we have to do a bit of boilerplate, but it should be possible to use

this for our Node<'gc> type now.

struct Node<'gc> { ... }

// We'll use an empty type to act as our proxy to implement `ArenaType`. We

// could actually also just implement `ArenaType` for Node<'static> to be cute,

// but this is a little clearer.

struct ArenaNode;

impl ArenaType for ArenaNode {

// The magic, we can now produce our real `Node<'gc>` type.

type Value<'gc> = Node<'gc>;

}

// And now we can finally define a type for our arena of nodes!

type ArenaOfNodes = Arena<ArenaNode>;So this actually works! We can now use our Arena to allocate nodes, at least

within the body of Arena::enter, and we are perfectly able to allocate types

which themselves contain branded Ids.

It's extremely interesting that we can just use a 'static in the type of

slots in Arena and everything still works out. We're lucky that the checked

indexing system can actually use any real lifetime for Id, as long as there

is the right kind of callback boundary with a for<'gc>!

We still have to tackle storing some kind of pointer to values outside of

Arena::enter, otherwise our arena still isn't very useful. We have a promising

lead though, which is that we are now able to refer to types "inside" the

arena (Node<'gc>) with a 'static (ArenaNode).

Really, the meaningful part of Gc<'gc> types is just the index, we could

just... provide a way of getting the raw index from a Gc and producing a Gc

from a raw index?

impl<'gc, A: ArenaType> ArenaCtx<'gc, A> {

fn alloc(&mut self, value: A::Value<'gc>) -> Gc<'gc> { ... }

fn get(&self, gc: Gc<'gc>) -> &A::Value<'gc> { ... }

fn get_mut(&mut self, gc: Gc<'gc>) -> &mut A::Value<'gc> { ... }

// Return the plain index out of a branded `Gc` index

fn gc_to_index(self, gc: Gc<'gc>) -> usize { ... }

// Turn a plain index back into a branded `Gc` index.

//

// If the `index` does not refer to a valid, allocated value, then return

// `None`.

fn index_to_gc(self, index: usize) -> Option<Gc<'gc>> { ... }

}That actually does work! Let's see how this might be finally used to create our linked list...

struct Node<'gc> { ... }

impl ArenaType for ArenaNode { ... }

impl<'gc> Node<'gc> {

fn new(value: String) -> Self { ... }

fn next(&self) -> Option<Gc<'gc>> { ... }

fn set_next(&self, next: Option<Gc<'gc>>) { ... }

}

// We can use it like this...

let arena = Arena::<ArenaNode>::new();

// We pick a "root" value of the `a` node.

let a_root: usize = arena.enter(|ctx| {

let a = ctx.alloc(Node::new("1".to_owned()));

let b = ctx.alloc(Node::new("2".to_owned()));

let c = ctx.alloc(Node::new("3".to_owned()));

a.set_next(Some(b));

b.set_next(Some(c));

c.set_next(Some(a));

ctx.gc_to_index(a)

});

// Now, even after we exit the arena, we can re-enter it and get back our

// `root`!

arena.enter(|ctx| {

let a = ctx.index_to_gc(a_root).unwrap();

let b = a.next().unwrap();

let c = b.next().unwrap();

});It's a little depressing that we're back to passing around plain usize indexes

(which we can get wrong), but at least the structure inside doesn't have

fallible indexes! This is actually a big improvement, especially if the number

of "roots" that we need outside of our arena is relatively small, like, for

example, the single root node of a graph.

We haven't finished yet, but we're in the home stretch. We need one more thing to make this complete: to actually have tracing garbage collection!

What we'd like to do here is is something like this, from the outside of the

Arena...

impl<A: ArenaType> Arena<A> {

// Magically, somehow, remove every unreachable value.

//

// What makes a value reachable? Well, we have to pick what we mean by that

// by specifying our *root set*, and what we'd like is to remove everything

// not reachable from this root set. Let's just pass all the roots in!

fn collect(&mut self, roots: impl IntoIterator<Item = usize>) { ... }

}Which is a pretty simple API!. It should be obvious that to do this we need to

know something about our held values, how else could the Arena can't know

that you can reach b from a and c from b? To do this, we'll add a single

method to our ArenaType trait and add one new helper trait.

// Helper trait for tracing held `Gc` pointers

trait Visitor<'gc> {

fn visit(&mut self, gc: Gc<'gc>);

}

trait ArenaType {

type Value<'gc>;

// The implementation of this method should visit every held `Gc<'gc>`

// pointer and call `visitor.visit(gc)` on it.

fn trace<'gc, V: Visitor<'gc>>(this: Self::Value<'gc>, visitor: &mut V);

}Let's see a brief sketch of the rest of the missing pieces...

impl ArenaType for ArenaNode {

type Value<'gc> = Node<'gc>;

fn trace<'gc, V: Visitor<'gc>>(this: Node<'gc>, trace: &mut V) {

// This is all our user code needs to do!

if let Some(next) = self.next.get() {

visitor.visit(next);

}

}

}

impl<A: ArenaType> Arena<A> {

...

fn collect(&mut self, roots: impl IntoIterator<Item = usize>) {

let queue: Vec<usize> = roots.into_iter().collect();

let visited: HashSet<usize> = HashSet::new();

// We won't work through it in detail here, since what we're doing

// is a very standard "mark-and-sweep", but what we do is start with

// our set of `roots` and call `A::trace` on each one. We'll need an

// implementation of the `Visitor` helper trait, and the helper trait

// should push every `Gc` visited by `A::trace` to our `queue`.

//

// Once a `Gc` is visited, we make sure that we insert its index into

// the `visited` set.

//

// Finally, take every node *not* in the `visited` set and "free" it,

// setting the value to `None` and putting the index into the free list.

// And we're done!

}

}Whew, and that's really it this time! We've made a primitive (if complete!) tracing garbage collection which can be made in safe code!

We have three possible sources of incorrectness left...

- External "roots" are

usizeindexes which can be mixed up, stored incorrectly etc. - We have to pass in our root set explicitly.

- Internally, it should be impossible for any access of a

Gc<'gc>index to panic, assuming that theArenaType::tracemethod is implemented correctly.

But this is really REALLY close to something actually nice! What we've done in

this section is build all the core pieces of a minimum viable gc-arena style

interface to garbage collection. This really is every piece that you need to

understand the core idea of this style of garbage collector... except for one

new wrinkle.

What if we made Gc<'gc> instead store a pointer?

A sketch of a real, raw-pointer based GC

We just need to make a few more changes to our design to reach something that,

at least from the outside, is pretty much feature parity with a collector

like gc-arena.

This is going to start going faster both because the details are messier but also because the internals are not so important.

First, it's pretty terrible both that we have our roots as plain usize AND

that we have to remember to pass the roots into Arena::collect. this is

actually pretty easy to solve using handle types.

struct ArenaRoots<A: ArenaType> {

roots: Vec<Weak<InnerRoot<A>>>,

}

// Instead of `usize`, hand out something that wraps an `Arc`. We can then

// both iterate over the roots in `Arena::collect` without specifically having

// to list them, *and* we can pack some identifier data into the `InnerRoot`

// to make sure that we panic at runtime if a `Root` is used with the wrong

// `Arena`.

struct Root<A: ArenaType>(Arc<InnerRoot<A>>);

impl<A: ArenaType> Arena<A> {

...

// We no longer need to list all of our roots explicitly!

fn collect(&mut self) { ... }

}

impl<'gc, A: ArenaType> ArenaCtx<'gc, A> {

...

fn gc_to_root(self, gc: Gc<'gc>) -> Root<A> { ... }

// Panics if the given `root` was not produced from `gc_to_root` of the

// same *Arena*. It is fine if it is produced by a different call to

// `Arena::enter`, though!

fn root_to_gc(self, root: &Root<A>) -> Option<Gc<'gc>> { ... }

}So that's actually quite easy, and solves at least one of our listed sources of incorrectness. Unfortunately, there's no real way to statically guarantee that the right root is used on the right arena, but this is still valuable! In experience dealing with garbage collection systems like this, there are many more internal pointers than external ones, sometimes there is as little as a single root for a huge number of inner values.

The biggest remaining problem usability wise is that we can only allocate a

single type T. This is actually totally solvable, even safely!

Please read the extremely excellent blog post by fitzgen on how to do garbage collection safely in Rust. His design is more or less a much better version of the design we've sketched out so far, plus multiple allocated types and minus using generativity tricks. He is the one that actually sat and did proof-by-construction that totally safe tracing GC is possible.

But we're not going to try to explain how to do this using raw indexes. Instead,

let's make the biggest change so far to our design, which will finally explain

the real reason we've been doing things this way so far: we're going to change

Gc<'gc> from an index to a pointer. Here's what our new Gc type will

be...

// A simple, transparent wrapper around a raw pointer.

#[derive(Copy, Clone)]

#[repr(transparent)]

struct Gc<'gc, T> {

ptr: NonNull<T>,

_id: Id<'gc>,

}We'll also (without going over the details of how) let our Arena allocate

multiple types. Let's see a full sketch of what the whole API could look like

with this change. We'll need a few drive by changes as well to the ArenaType

trait.

// Our branding type.

type Id<'gc> = ...;

// A simple, transparent wrapper around a raw pointer.

#[derive(Copy, Clone)]

#[repr(transparent)]

struct Gc<'gc, T> {

ptr: NonNull<T>,

_id: Id<'gc>,

}

// Helper trait for tracing.

trait Visitor<'gc> {

fn visit<T: Trace>(&mut self, gc: Gc<'gc, T>);

}

// A new trait! This is exactly the same as the `trace` method from our

// `ArenaType` trait, but now since our `Arena` will store multiple types

// internally, this needs to be separate.

//

// It is also *unsafe*! We want to deal with raw pointers, so if this method

// *misses* any held pointer, we will delete it on collection and it will become

// dangling!

unsafe trait Trace<'gc> {

fn trace<V: Visitor<'gc>>(this: Self::Value<'gc>, visitor: &mut V);

}

// This trait goes back to just being a way to produce a `Value` for any given

// `'gc` lifetime.

trait ArenaType {

// The actual value our arena will store, whatever the 'gc lifetime.

type Value<'gc>: Trace<'gc>;

}

// A "root" handle into some `Arena`. Note that we must use the `ArenaType`

// projection type instead of a bare type with a 'gc lifetime attached.

struct Root<A: ArenaType> { ... }

// Our `Arena` type is now typeless! We can allocate any type within it. We are

// *skipping* what the internal storage looks like, but if you want to see one

// full example, you can look at the `gc-arena` internals.

struct Arena {

ctx: ArenaCtx<'static>,

}

struct ArenaCtx<'gc> {

...

_id: Id<'gc>,

}

impl Arena {

fn enter<F, R>(&mut self, f: F) -> R

where F: for<'gc> FnOnce(&mut ArenaCtx<'gc>) -> R

{ ... }

}

impl<'gc> ArenaCtx<'gc> {

// Typed variants of our functions from before...

// We changed `alloc` to take `&self` instead of `&mut self`, because since

// we're dealing with unsafety now, it's not a big deal to use internally

// mutable allocators (or even just the system allocator).

//

// We've also added a `Send` bound, the reason for this will be explained

// soon.

fn alloc<T: Trace + Send>(&self, value: T) -> Gc<'gc, T> { ... }

// Because of all the work we've done on compile time safety,

// `ArenaCtx::get` and `ArenaCtx::get_mut` should be able to be implemented

// as *raw pointer dererfs with no checks*.

//

// We have to trust that our branding system and our other soundness logic

// works, but if it does, that makes `Gc` *no more expensive than `&T`, and

// that's EXTREMELY cool!

fn get<T>(&self, gc: Gc<'gc, T>) -> &T { ... }

fn get_mut<T>(&mut self, gc: Gc<'gc, T>) -> &mut T { ... }

// These methods are now a bit more funky. Calling `gc_to_root` will always

// require manually naming some *projection* type `A` to make sure we can

// get a "lifetimeless" version of `T`.

fn gc_to_root<A: ArenaType>(self, gc: Gc<'gc, A::Value<'gc>>) -> Root<A> { ... }

fn root_to_gc<A: ArenaType>(self, root: &Root<A>) -> Option<Gc<'gc, A::Value<'gc>>> { ... }

}Whew! So this, finally is the core of the real design of a branded pointer

GC like gc-arena.

In truth, actual

gc-arena's design is both more complex than this and also not quite as good. The design ofgc-arenamakes slightly different tradeoffs for ergonomics and a lot of extra complexity (like write barriers) due to supporting incremental collection.

Without gc-arena's goal of trying to use raw pointers to the maximum extent

possible, it would not have been necessary to take a detour into "generativity".

It's really this idea, using "generativity" to make almost all of the pointer

accesses provably safe, that allows for this design using raw pointers to work.

This design is even slightly better than the current gc-arena design. Let's do

something that will look incredibly spooky, but I promise is sound.

// A simple, transparent wrapper around a raw pointer.

#[derive(Copy, Clone)]

#[repr(transparent)]

struct Gc<'gc, T> {

ptr: NonNull<T>,

_id: Id<'gc>,

}

// Okay, there are no *bounds* on `T`, how can this be sound?

//

// Well, our API is inherited from a different design where `Gc` was a simple

// *index*. Note that we have added nothing like `ops::Deref` to `Gc` since we

// changed it to store a pointer!

//

// We're still *using* the `Gc` value in the same way we would use an index.

// Usize indexes can be Send and Sync because there's nothing you can *do* with

// them without the a Vec to index into, and despite how sketchy this looks,

// this is also true here!

//

// `Gc` is totally inert without calling `ArenaCtx::get` or similar, so as long

// as we properly control what we can do with `Arena` and `ArenaCtx` we can

// safely make `Gc` `Send` and `Sync`!

unsafe impl<'gc, T> Send for Gc<'gc, T> {}

unsafe impl<'gc, T> Sync for Gc<'gc, T> {}So let's use this in our linked list example and show why this is a cool

feature.[23]One that gc-arena is actually missing

. We'll

also show off another feature that comes from gc-arena without going into too

much detail about it: since Trace is such a mechanical trait to implement, we

can write a proc-macro to implement it for us, no longer requiring the user to

write ANY unsafe code!

// We can make a proc-macro that derives `Trace` automatically! As long as we

// have a large set of standard types that also implement `Trace`, like `Option`

// and `Cell`, we can use the same strategy for automatic implementations that

// other similar traits like `Clone` or `Serialize` do, saving us from having to

// write any unsafe code!

#[derive(Trace)]

struct Node<'gc> {

next: Cell<Option<Gc<'gc, Node<'gc>>>>,

value: String,

}

struct ArenaNode;

impl ArenaType for ArenaNode {

type Value<'gc> = Node<'gc>;

}

impl<'gc> Node<'gc> {

fn new(value: String) -> Self { ... }

fn next(&self) -> Option<Gc<'gc, Node<'gc>>> { ... }

fn set_next(&self, next: Option<Gc<'gc, Node<'gc>>>) { ... }

}

let arena = Arena::new();

// We can place values into our arena, like before...

let a_root = arena.enter(|ctx| {

let a = ctx.alloc(Node::new("1".to_owned()));

let b = ctx.alloc(Node::new("2".to_owned()));

let c = ctx.alloc(Node::new("3".to_owned()));

a.set_next(Some(b));

b.set_next(Some(c));

c.set_next(Some(a));

// We can create a root to `a`, but unfortunately we have to name a

// projection type manually.

ctx.gc_to_root::<ArenaNode>(a)

});So now that we have this arena and our a_root, both 'static. Whenever we

want, we can "enter" our arena and get back the non-'static real Node<'gc>

values we've stored, but once we've "exited", the only types we have our

'static! We can move around whole networks of cyclic pointers, neat!

But you know what's even better about this design? Both Arena and Root can

also implement Send!

This is why we worked so hard to keep Gc Send... Cell is Send,

Option is Send, Gc is Send, so our Node value is Send! This is

also why we have to put that extra Send bound on T: Trace + Send in

ArenaCtx::alloc... we haven't talked about what the internal storage of

Arena actually looks like, but internally it must hold something akin to

Box<dyn Trace + Send> if it itself is also Send, so that bound must be

there.

This is the real logic behind this entire design, and if you take away one piece

of this, everything falls apart. Making Gc implement Deref means it can't

implement Send if T is not Sync, which for a T of Node it can't be

because Node contains Cell. This is why Gc acts like an "index", to make

the "jail" that is the Arena able to hold things which are only Send, not

Sync.

Remember our try at using raw circular references from before? This is

fundamentally a more powerful design than that, even if it has a huge number

of moving pieces. It is in fact almost like separate, stranger version of

self-borrowing: like other self-borrowing solutions, everything is inside a box

that is "'static at rest", but even better than that, it's a way to have a

network of safe pointers (like references) that don't have infectious !Send.

This is extremely useful, and pretty unique within the Rust world in the powers it gives you!

Wrapping up -- The Bigger Picture

This design that I have explained is a very cool pattern, but it takes so many really strange steps to get here that I have struggled in the past to explain it in what I feel is the right way.

This time, I've tried a different tack and I'm explaining a design starting

from what Rust is naturally good at, Vec and indexes, and trying to move from

that into a full network of self-references instead based on fast pointers.

Really, gc-arena style GC designs are nothing more than

"Vec + indexing" + "tracing GC" + "checked indexing"

Having statically verified indexes is virtually the same as a safe pointer, the only difference is that there's no pointer offset!

There are also issues that pervade Rust that can have solutions that are at

least the same shape as gc-arena. For example, suppose our linked list was

not circular and could Rc instead of Gc... We know that Rust cannot send

networks of Rc but it's interesting that it apparently can send networks

of Gc?

The idea of "jailing" a set of values, being able to "exit" the jail and do something that is sound as long as you can guarantee only the whole jail can move at once seems to be a somewhat difficult to achieve but compelling feature of Rust.

When I started gc-arena I had an extremely lofty, pretty arrogant goal: to

be able to solve Rust integration with garbage collection. I don't think I've

done that, simply because of the major limitation of gc-arena style designs:

that no garbage collection can take place inside methods like Arena::enter.

I used to think of this as a limitation that more or less made the approach only extremely niche, but I actually no longer think this is quite as true.[24]It's still not for every problem, it's pretty hard to implement a runtime that allows code to run indefinitely and still use this style of GC.

Go find a random "systems programmer" and ask them how they feel about adding a GC to their program and they will almost certainly make a face at you like this:

୧(๑•̀ᗝ•́)૭

Now ask them if they want a thousand GCs in their program and I bet you most of them will react even worse! One garbage collector is awful, a thousand has to be a thousand times worse!

In my opinion, this is fundamentally wrong. Many (but not all! looking at

you safe-gc) of Rust's garbage collector

solutions try to come up with a collector that acts like the kind found in

garbage collected languages, operating on the entire process at once or at

least on an entire system Thread at once.[25]seamlessly integrated

Fundamentally, tracing garbage collection must have a runtime "proportional to the size of the live set", as they say in GC literature. If you have a completely incremental GC this can be okay, but any GC (and this absolutely counts for most state-of-the-art generational GCs) that has to stop-the-world to scan a whole generation WILL pay a cost proportional to the live set in that generation.

You know what language is known for its very low tail latency? Erlang. One of THE primary reasons for this is that it doesn't just have one GC it has many separate GCs, one per Erlang process![26]They're like a lightweight, strictly isolated thread. If one process uses a lot of heap, this will not interrupt other processes, keeping P99 pause time very low.

People often times separate systems programming languages by "not

having a GC", and I think this is totally, completely backwards. GCs are

everywhere, wherever you fall on the debate about whether Rc + Drop

is a GC or not, even tracing GCs are everywhere, like inside the Linux

kernel.

I'm going to make a very strange, maybe controversial statement:

Systems programming languages are languages where you can have any number of garbage collectors at a time.

I think safe interfaces to garbage collection are potentially unavoidably intertwined with safe representations of cyclic pointers in general. I think my dream systems programming language would be able to (SAFELY!) integrate GCs a la carte, and I also think that GCs are sort of a missing data structure for systems programming.

This is achievable in Rust today, as we've shown, but obviously there is still quite a bit of pain to be had to get the benefits. Sometimes, though, provable safety really is worth the pain.

Tracing GC errors are some of the worst kinds of UB bugs you will ever

encounter. They often involve wrongly unreachable objects or missed

"barriers"[27]Part of the complexity of having a concurrent GC

like gc-arena, which is not covered here.

and the crashes can be

completely spatially and temporally separate from wherever the true logic bug

is, only triggered on the next entire GC cycle.

I have (and this is completely true) never had to deal with a GC bug using

gc-arena outside of developing gc-arena itself. I also asked one of the core

Ruffle devs about this, and as far as

they could tell, Ruffle's whole history seems to have had three instances of

this[28]or, this as many as they could find

, one was that

gc-arena did not allocate over-aligned structures properly (which has nothing

to do with garbage collection), and two cases of unsafe impl Collect {}[29]gc-arena's version of the Trace trait is called Collect

that were stale and had missed fields. Now, obviously those last two are

potentially bad and hard to debug, but the point is having to manually implement

Collect in gc-arena is really rare, and that is the reason Ruffle

has not had to spend as much engineering effort tracking as they would have

otherwise!

Being able to integrate safe garbage collection into a systems

programming language, has other benefits too. In Fields of

Mistria for example, the fabricator

VM is already plenty faster than the GMS2 one, but this isn't actually a

live-or-die issue for NPC Studio to begin with. The simple fact that Rust

code can directly and safely access ALL of the internal data structures of

fabricator values and can also directly construct values which are themselves

safely garbage collected is huge! It means that at no point are you trapped

in script code, almost any script code can be replaced by Rust, safely, and

almost even down to the smallest inner loop (modulo the inherent Rust call

overhead, which is pretty low!) rewriting a section of GML in Rust will always

be faster. This pretty rare among scripting language FFI boundaries!

I'm not trying to get you to go out and use any of my crates, this post is not meant to be an advertisement.[30]And frankly, I'm pretty bad at crate maintainership.

What I've tried to do here is explain why I am in such a crazy corner of Rust, how I got here, why I needed to be here, and that I think this corner is actually under-explored. If my talk inspires somebody's interest in this and they can do a better job than me writing garbage collection libraries with this design and they go do that, I'll use their library and be utterly overjoyed.

I think there's a place in Rust, and frankly all systems programming, for more easily integratable "GC as just a fancy data structure", so that you can pick the right algorithm for the right task and not have to deal with a global systems decision that somebody else has made. This is kind of the essence of systems programming!

Thank you for reading!